LatentMesh

The evidence plane for production AI.

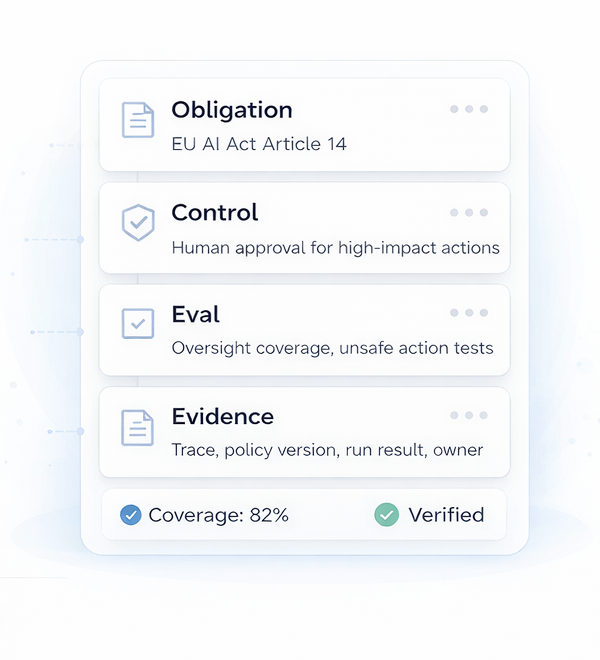

From obligation to proof: how regulatory obligations become controls, evaluations, evidence, and incident response.

By Karthik Mahalingam. Engineering leader at Microsoft, working on Purview Data Lifecycle Management: retention, compliance, and governance services for large enterprise environments.

What I write about

AI compliance

How regulatory obligations become engineering controls, evaluations, and audit-ready evidence.

Start with the AI Compliance guide →

Agent failures

How agent systems break, drift, and misbehave once they leave the demo environment.

Evaluation

Practical evals for regression, reliability, and operational trust in production.

Safety

Guardrails, control surfaces, observability, containment, and human review loops.

Featured series

All series →

Reliable Agent Systems

How to design, test, and operate individual agent systems reliably.

Start here if you are building one agent, workflow, or AI system and need to understand how it fails, how to evaluate it, and how governance becomes operational.

Read the series →

New series: Evaluating Agent Fleets

How to allocate limited evaluation capacity across many agents, classes, workloads, and changes.

Start here if your problem is no longer one agent. This series is about fleet-scale evaluation: work units, baseline inheritance, trigger-routed re-evaluation, sampling, eval packs, and evidence.

Read the series →

Latest essays

View all →

Stay in the loop

A weekly note on building reliable AI systems: governance, evals, controls, and compliance.

No spam. Unsubscribe anytime.